Currently working on:

- Benchmarking against other monocular depth models (e.g., Apple Depth Pro, ZoeDepth, LeReS) on VRX/field clips

- Log-linear inverse-depth calibration with robust least squares (Huber/Tukey) to handle outliers/specular water

- Auto-calibration: collect LiDAR↔image correspondences and fit parameters via maximum-likelihood (MLE)

Monocular Depth Estimation on the WAM-V

This project benchmarks a calibrated monocular depth pipeline (Depth Anything V2) against LiDAR on the WAM-V platform, both in VRX (Virtual RobotX) Gazebo-based simulation and in field footage. The aim is to understand where monocular depth can replace or complement LiDAR for near-term navigation and docking, and to quantify how much calibration reduces scale/bias errors.

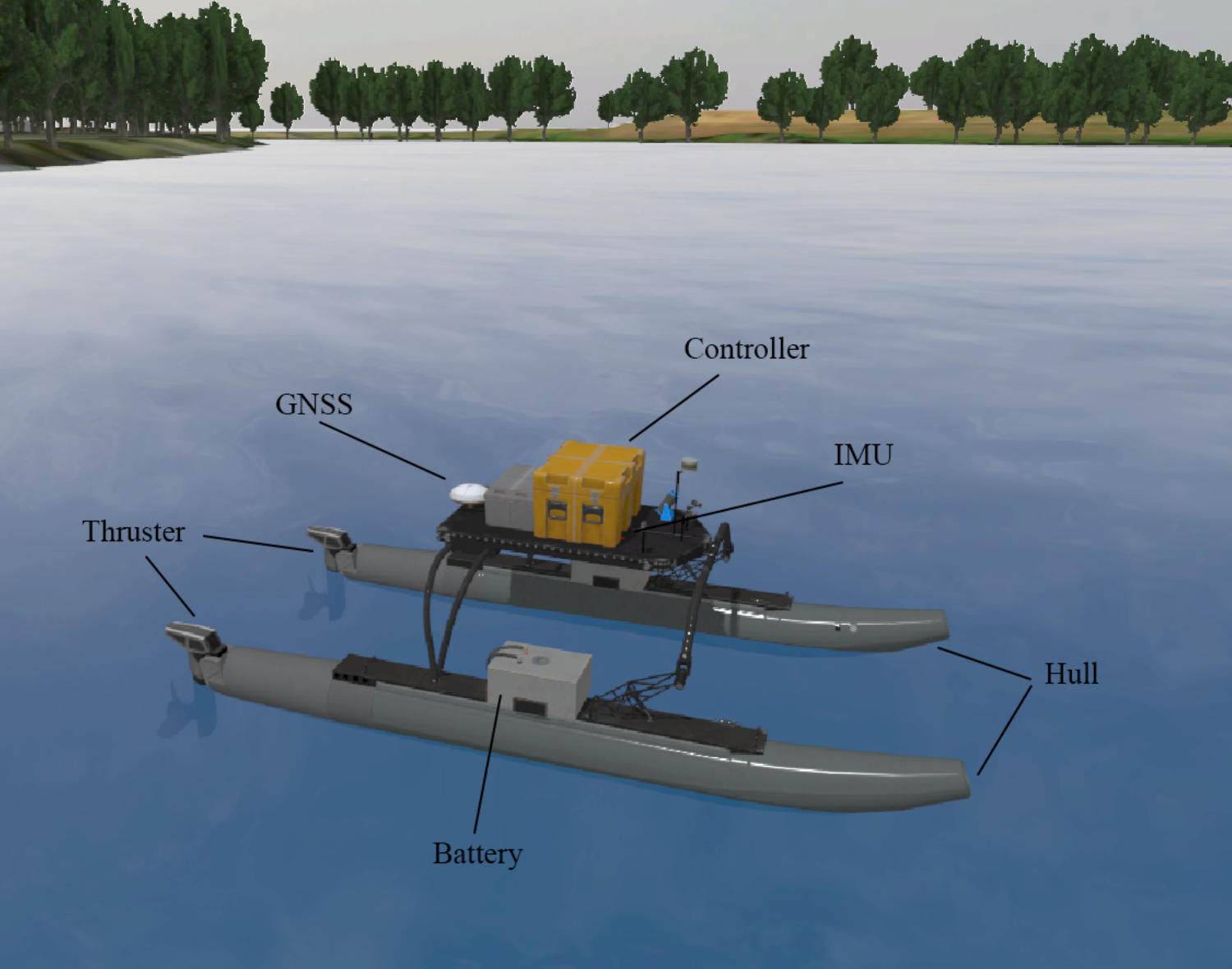

Introducing WAM-V & VRX

WAM-V (Wave Adaptive Modular Vessel) is a lightweight, catamaran-style ASV with modular mounting for sensors and compute. Its compliant pontoons filter wave energy, which helps keep sensors usable in choppy conditions while still reflecting realistic marine dynamics.

VRX (Virtual RobotX) is a Gazebo-based maritime simulator that mirrors the real RobotX challenges: navigation around buoys, station-keeping, docking, and signage interpretation. It provides controllable wind/wave fields, ground-truth trajectories, and standardized tasks so we can iterate in sim, benchmark perception, then transfer to field runs.

Depth Anything V2

Depth Anything V2 is a foundation model that predicts dense relative depth from a single RGB image. It’s trained across diverse scenes, which gives strong zero-shot performance and good boundary preservation—handy when the world is mostly water, rails, docks, and trailers. The catch: outputs aren’t in meters, and reflective water can confuse local patches.

Monocular models predict relative structure, not absolute distance. The raw output has an arbitrary dynamic range that differs from scene to scene. A simple linear normalization (e.g., clipping to the 1st–99th percentile and mapping to [0,1]) helps visualization but does not recover true meters. Consequences:

- Far ranges get compressed; near objects appear stretched.

- Scale drifts between scenes, breaking consistency across runs.
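To make the scale-drift point concrete, here is a minimal sketch of percentile normalization. The function name `normalize_percentile` and both synthetic "frames" are illustrative assumptions, not part of the original pipeline; the 1st–99th percentile range follows the example in the text.

```python
import numpy as np

def normalize_percentile(rel, lo=1.0, hi=99.0):
    """Clip to the [lo, hi] percentile range of this frame and rescale to [0, 1]."""
    rel = np.asarray(rel, dtype=np.float64)
    p_lo, p_hi = np.percentile(rel, [lo, hi])
    if p_hi <= p_lo:  # flat frame: avoid division by zero
        return np.zeros_like(rel)
    return np.clip((rel - p_lo) / (p_hi - p_lo), 0.0, 1.0)

# Two synthetic frames (assumed values). Both contain the same "object"
# with raw output 50.0 at index 0, but the surrounding content differs.
scene_a = np.concatenate([[50.0], np.linspace(10.0, 100.0, 199)])
scene_b = np.concatenate([[50.0], np.linspace(10.0, 400.0, 199)])

norm_a = normalize_percentile(scene_a)
norm_b = normalize_percentile(scene_b)

# Same raw value, different normalized value: the per-frame scale has drifted.
print(norm_a[0], norm_b[0])
```

Because the percentiles are recomputed per frame, the normalized value of an object depends on everything else in view, which is why a fixed mapping from normalized depth to meters cannot stay consistent across runs.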

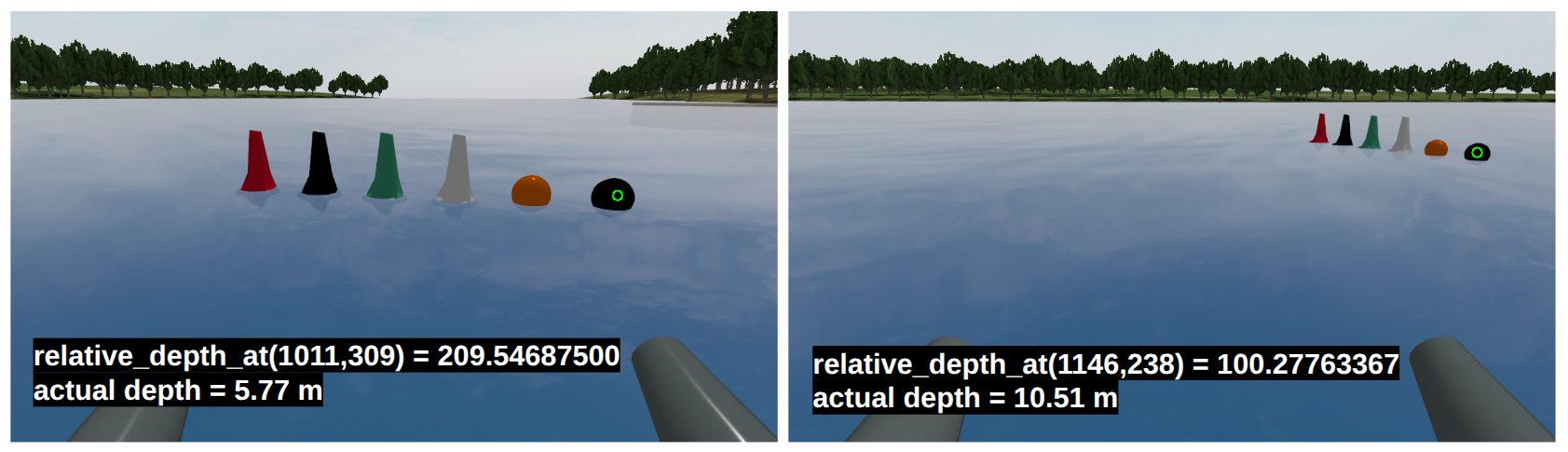

VRX Visualization (Relative Depth)

This clip shows Depth Anything V2 running on the WAM-V camera feed in the VRX simulator. The model’s output is visualized as a normalized color map to highlight relative structure: nearer regions appear “hotter,” farther regions “cooler.” The normalization is percentile-based (e.g., 2–98%) on each frame so the full colormap stays informative even as the scene changes. This is great for seeing edges (dock face, rails, buoys) and for confirming that the network preserves object boundaries on water.

VRX Projection — Linear Metric Mapping

After visualizing relative depth, I applied a simple linear mapping to convert the model’s output to a nominal metric scale and back-projected each pixel (RGB + depth) into 3D to publish a colored point cloud in RViz.

Model output: relative depth r in arbitrary units (higher values → closer).

Linear mapping (attempt): normalize r to [0,1], then map it to metric depth Z ∈ [zmin, zmax] using

Z = zmin + (1 − r) · (zmax − zmin)

This produces a dense, colored point cloud that looks locally plausible, but it exposes the limits of linear scaling.

- Scale mismatch across scenes: the same object lands at different depths depending on scene content.

- Far-range compression: distant structures bunch into thick, smeared planes.

- Near-range stretch: close objects expand in depth, inflating clearances.
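The linear mapping and the back-projection step can be sketched as follows. This is a minimal example under a standard pinhole camera model; the intrinsics (`fx`, `fy`, `cx`, `cy`), the `z_min`/`z_max` values, and the function names are hypothetical placeholders rather than the values used in VRX.

```python
import numpy as np

def linear_depth(r, z_min, z_max):
    """Z = z_min + (1 - r) * (z_max - z_min), with r in [0, 1] and higher r = closer."""
    return z_min + (1.0 - r) * (z_max - z_min)

def backproject(depth, fx, fy, cx, cy):
    """Pinhole back-projection of an HxW depth image to an (H*W, 3) array of
    points in the camera optical frame (x right, y down, z forward)."""
    h, w = depth.shape
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    x = (u - cx) * depth / fx
    y = (v - cy) * depth / fy
    return np.stack([x, y, depth], axis=-1).reshape(-1, 3)

# Tiny 2x2 normalized depth image (assumed values).
r = np.array([[1.0, 0.5],
              [0.25, 0.0]])
depth = linear_depth(r, z_min=1.0, z_max=50.0)
points = backproject(depth, fx=500.0, fy=500.0, cx=0.5, cy=0.5)
```

Pairing each row of `points` with the RGB value of the same pixel gives the colored point cloud; publishing it to RViz is left out of the sketch.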

Root cause: the model’s relative depth is not linearly related to true metric distance (it behaves more like inverse depth). A linear map cannot correct this, motivating a calibrated non-linear mapping (e.g., reciprocal or monotone spline) using a few LiDAR-referenced anchors.
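A short derivation makes the mismatch explicit. It assumes the true relation has the inverse form Z = a / r + b with a > 0, where a and b are generic symbols; rescaling r to [0,1] is an affine change of variable and does not alter the argument.

```latex
\begin{align}
Z_{\text{lin}}(r) &= z_{\min} + (1 - r)\,(z_{\max} - z_{\min}),
  & \frac{dZ_{\text{lin}}}{dr} &= -(z_{\max} - z_{\min}) \\
Z_{\text{inv}}(r) &= \frac{a}{r} + b,
  & \frac{dZ_{\text{inv}}}{dr} &= -\frac{a}{r^{2}} = -\frac{(Z_{\text{inv}} - b)^{2}}{a}
\end{align}
```

The linear map has a constant slope, so equal steps in r always produce equal steps in meters. Under the inverse model the slope grows with the square of the range: a step in r is a small metric step near the camera and a large one far away. No single choice of zmin and zmax can match both ends, which is consistent with the near-range stretch and far-range compression listed above.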

Calibration — Non-Linear Metric Mapping

To recover metric depth, I calibrated the model’s relative depth against LiDAR. I projected LiDAR into the camera optical frame and sampled the depth at a small region of interest (ROI). At the same ROI, I read the model’s relative depth r. With matched pairs (r, z), I fit a simple inverse-depth curve.

Calibration setup:

- Fixed camera pose; reference targets in the 5–10 m range.

- Extracted relative depth at the same pixel locations from the model output.

- Extracted metric depth from LiDAR projected into the camera frame.
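The pairing step can be sketched as below. It is a minimal example under a pinhole model: the extrinsic `T_cam_lidar`, the intrinsic matrix `K`, the ROI bounds, and the use of the median inside the ROI are all assumptions for illustration, since the text does not specify how the ROI values were aggregated.

```python
import numpy as np

def project_lidar(points_lidar, T_cam_lidar, K):
    """Transform Nx3 LiDAR points into the camera optical frame and project
    them with a pinhole model. Returns pixel coordinates and depths for the
    points that lie in front of the camera."""
    pts_h = np.hstack([points_lidar, np.ones((len(points_lidar), 1))])
    pts_cam = (T_cam_lidar @ pts_h.T).T[:, :3]
    pts_cam = pts_cam[pts_cam[:, 2] > 0.0]  # drop points behind the camera
    uvw = (K @ pts_cam.T).T
    uv = uvw[:, :2] / uvw[:, 2:3]
    return uv, pts_cam[:, 2]

def sample_pair(uv, z, rel_depth, roi):
    """Return (median relative depth, median LiDAR depth) inside
    roi = (u0, v0, u1, v1), or None if no LiDAR point falls in the ROI."""
    u0, v0, u1, v1 = roi
    inside = ((uv[:, 0] >= u0) & (uv[:, 0] < u1) &
              (uv[:, 1] >= v0) & (uv[:, 1] < v1))
    if not inside.any():
        return None
    r_med = float(np.median(rel_depth[v0:v1, u0:u1]))
    z_med = float(np.median(z[inside]))
    return r_med, z_med

# Synthetic example (all values assumed). The identity extrinsic means the
# points are already expressed in the camera optical frame.
K = np.array([[500.0, 0.0, 320.0],
              [0.0, 500.0, 240.0],
              [0.0, 0.0, 1.0]])
T_cam_lidar = np.eye(4)
lidar = np.array([[0.0, 0.0, 8.0],
                  [0.05, 0.0, 8.1],
                  [0.0, 0.0, -3.0]])      # last point is behind the camera
rel_depth = np.full((480, 640), 120.0)    # stand-in for the model output

uv, z = project_lidar(lidar, T_cam_lidar, K)
pair = sample_pair(uv, z, rel_depth, roi=(310, 230, 330, 250))
```

Each call to `sample_pair` yields one matched (r, z) pair; repeating it over several targets builds the set used for the fit.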

Fitted model:

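Z(r) = 904.4 / r + 1.62

Here Z is in meters, and r is not the [0,1]-normalized value from the linear mapping: for the 5–10 m calibration targets, the curve implies r of roughly 108–268.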

This non-linear mapping captures the inverse-depth behavior, reduces far-range compression, and stabilizes scale across scenes.

- Non-linear relation captured: relative depth is not linearly proportional to meters.

- Inverse-depth behavior: far distances expand appropriately; near distances don’t over-stretch.

- Improved geometry: dock planes thicken less; ranges align more closely with LiDAR.
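A curve of the form Z = a / r + b is linear in its two unknowns, so it can be fit with ordinary least squares on the regressor 1 / r. The sketch below shows one way to do that. The function names are illustrative, and the (r, z) pairs are synthetic values generated from the fitted curve in the text, not the original measurements.

```python
import numpy as np

def fit_inverse_depth(r, z):
    """Least-squares fit of z = a / r + b, which is linear in (a, b)."""
    r = np.asarray(r, dtype=np.float64)
    z = np.asarray(z, dtype=np.float64)
    A = np.column_stack([1.0 / r, np.ones_like(r)])
    (a, b), *_ = np.linalg.lstsq(A, z, rcond=None)
    return float(a), float(b)

def apply_calibration(r, a, b, eps=1e-6):
    """Map relative depth to meters; eps guards against division by zero."""
    return a / np.maximum(r, eps) + b

# Synthetic pairs (assumed), placed on the curve reported above.
r = np.array([100.0, 120.0, 150.0, 200.0, 260.0])
z = 904.4 / r + 1.62

a, b = fit_inverse_depth(r, z)
z_metric = apply_calibration(r, a, b)
```

With real, noisy pairs the recovered `a` and `b` will only approximate the underlying curve, and targets confined to a narrow range constrain the fit poorly outside that range.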

Results — Post-Calibration

After applying the non-linear calibration, the metric depth improves substantially. In RViz overlays, LiDAR points align closely with the calibrated Depth Anything V2 depth at corresponding pixels, and the water/ground plane appears much flatter, which suggests the scale is now well-behaved in meters.

- LiDAR agreement: Depth at sampled regions matches LiDAR within small residuals; edges (dock face, rails) line up cleanly.

- Flat, stable plane: The water/ground plane no longer “bows” or thickens with distance, indicating correct metric scaling.

- Far-range fidelity: Increasing the pixel stride (larger ROI sampling) reveals distant structures, such as shoreline trees, projected with coherent depth even at long range.

- Denser, useful maps: The colored point cloud is now metrically meaningful for planning near docks and trailers, with improved consistency across scenes.
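One way to put numbers on "small residuals" is to compare calibrated depth with LiDAR depth at the sampled pixels. The sketch below computes three common error measures. The metric choice, the function name `depth_errors`, and the sample values are assumptions for illustration, not results from this project.

```python
import numpy as np

def depth_errors(z_pred, z_lidar):
    """Mean absolute error, RMSE, and absolute relative error against LiDAR,
    ignoring pixels with missing or non-positive LiDAR depth."""
    z_pred = np.asarray(z_pred, dtype=np.float64)
    z_lidar = np.asarray(z_lidar, dtype=np.float64)
    valid = np.isfinite(z_pred) & np.isfinite(z_lidar)
    valid[valid] &= z_lidar[valid] > 0.0
    diff = z_pred[valid] - z_lidar[valid]
    return {
        "mae_m": float(np.mean(np.abs(diff))),
        "rmse_m": float(np.sqrt(np.mean(diff ** 2))),
        "abs_rel": float(np.mean(np.abs(diff) / z_lidar[valid])),
        "n": int(valid.sum()),
    }

# Made-up values purely to exercise the function; NaN marks a missing return.
z_lidar = np.array([5.0, 10.0, np.nan, 8.0])
z_pred = np.array([5.5, 9.0, 7.0, 8.0])
errs = depth_errors(z_pred, z_lidar)
```

Reporting these per range bin (for example near, mid, far) would show whether the calibration holds outside the 5–10 m band where the targets were placed.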

Next Steps

I plan to benchmark against other monocular depth models and to make the calibration more robust and automatic.

- Model benchmarking: Compare calibrated Depth Anything V2 against other monocular models (e.g., Apple Depth Pro, ZoeDepth, LeReS) on identical VRX and field clips.

- Calibration model upgrade: Fit a log-linear inverse-depth mapping with robust least squares (Huber/Tukey) to handle outliers and specular water patches.

- Auto-calibration (MLE): Automatically gather LiDAR↔image correspondences, then maximize likelihood to estimate scale parameters (and per-scene bias) without manual targets.
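As a sketch of the robust-fitting idea, the example below fits the inverse-depth curve Z = a / r + b with Huber weights via iteratively reweighted least squares (IRLS). It uses the plain inverse form rather than the log-linear variant. The threshold `delta`, the iteration count, and the synthetic data with two injected outliers are assumptions chosen for illustration.

```python
import numpy as np

def fit_inverse_depth_huber(r, z, delta=0.5, iters=50):
    """IRLS fit of z = a / r + b with Huber weights (delta in meters).
    Residuals within delta keep weight 1; larger ones get delta / |residual|."""
    r = np.asarray(r, dtype=np.float64)
    z = np.asarray(z, dtype=np.float64)
    A = np.column_stack([1.0 / r, np.ones_like(r)])
    w = np.ones_like(z)
    for _ in range(iters):
        sw = np.sqrt(w)
        params, *_ = np.linalg.lstsq(A * sw[:, None], z * sw, rcond=None)
        res = np.abs(z - A @ params)
        w = np.where(res <= delta, 1.0, delta / np.maximum(res, 1e-12))
    return float(params[0]), float(params[1])

# Synthetic pairs on the curve from the text, plus two gross outliers
# standing in for specular-water samples (assumed values).
r = np.linspace(90.0, 300.0, 30)
z = 904.4 / r + 1.62
z[[3, 17]] += 15.0

# Ordinary least squares for comparison.
A = np.column_stack([1.0 / r, np.ones_like(r)])
(a_ls, b_ls), *_ = np.linalg.lstsq(A, z, rcond=None)

a_huber, b_huber = fit_inverse_depth_huber(r, z)
print(a_ls, a_huber)  # the Huber estimate should sit closer to 904.4
```

SciPy's `scipy.optimize.least_squares` also accepts `loss="huber"` with an `f_scale` parameter, which may be a more convenient route than hand-rolled IRLS once the model stops being linear in its parameters.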